Quick Summary: AI porn refers to sexually explicit images, videos, or audio created using artificial intelligence tools rather than filmed with real people. According to a 2019 Deeptrace study, 96% of deepfake videos online were pornographic, nearly all depicting women without their consent. These AI systems use machine learning models to generate synthetic adult content, raising serious ethical, legal, and social concerns about consent, misuse, and the proliferation of non-consensual intimate imagery.

The internet changed pornography forever. Now artificial intelligence is doing it again.

AI-generated pornography has exploded from a fringe technology into a widespread phenomenon that’s reshaping adult content, threatening privacy, and forcing lawmakers to scramble for solutions. But what exactly is AI porn, and why should anyone care?

Here’s the thing: this isn’t just about adult entertainment anymore. The same technology creating fantasy scenarios is being weaponized to create non-consensual intimate imagery of real people—celebrities, classmates, colleagues, anyone with photos online.

Understanding AI Porn: The Basics

AI porn encompasses any sexually explicit content created or manipulated using artificial intelligence algorithms. That includes everything from completely synthetic characters to realistic deepfakes of real people.

The technology relies on machine learning models trained on vast datasets of images and videos. These systems learn patterns, features, and relationships in visual data, then generate new content that mimics those patterns.

Two main categories dominate the landscape:

- Synthetic generation: Creating entirely fictional people and scenarios from scratch

- Deepfakes: Swapping real people’s faces onto existing pornographic content or creating fake videos of actual individuals

The distinction matters. Synthetic content raises ethical questions about objectification and unrealistic standards. Deepfakes raise questions about consent, privacy, and abuse.

How the Technology Actually Works

Most AI porn generators use one of several approaches. Generative Adversarial Networks (GANs) pit two neural networks against each other—one creates images, the other judges them. Through millions of iterations, the generator learns to create increasingly realistic outputs.

Diffusion models take a different path. They start with random noise and gradually refine it into coherent images based on text prompts or reference images. This approach powers many of the newest generators.

For deepfakes specifically, face-swapping algorithms map facial landmarks from source footage onto target videos. The AI tracks expressions, lighting, and angles to maintain consistency across frames.

Here’s where it gets concerning: these tools have become remarkably accessible. What once required technical expertise and expensive hardware now runs on consumer devices. Some platforms offer simple text-to-image interfaces where users type descriptions and receive explicit images seconds later.


The Scale of the Problem

The numbers point to more than a growing problem: they show one that is accelerating. Research referenced by MIT Sloan School of Management highlighted how fast deepfake content was already spreading, and that momentum hasn’t slowed.

What stands out most is how that content is distributed. Findings from Deeptrace showed that the vast majority of deepfake videos are pornographic, and nearly all of those cases involve women, often without their knowledge or consent. That pattern still shapes the conversation today.

By early 2026, the scale has shifted again. Industry reports suggest deepfake content has grown by around 500% since 2024. Earlier projections estimated a jump from about 500,000 pieces of content in 2023 to around 8 million by 2025. Some larger figures circulate, but they usually refer to all synthetic media, not just videos.
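
The two figures above are easier to compare once they are put on the same footing. The following is a minimal sketch of that arithmetic, using only the numbers quoted in this section; the function name `percent_increase` is our own, and the inputs are reported estimates rather than verified counts.

```python
def percent_increase(old: float, new: float) -> float:
    """Return the percentage increase from old to new."""
    if old <= 0:
        raise ValueError("old must be positive")
    return (new - old) / old * 100.0


# Projection quoted above: ~500,000 items (2023) to ~8 million (2025).
projected = percent_increase(500_000, 8_000_000)

# A "500% increase" means the total is six times the starting value.
multiplier_for_500_percent = 1 + 500 / 100

print(f"2023 -> 2025 projection: {projected:.0f}% increase "
      f"({8_000_000 / 500_000:.0f}x the 2023 figure)")
print(f"A 500% increase corresponds to {multiplier_for_500_percent:.0f}x")
```

Read this way, the earlier projection implies a 16-fold rise over two years, while "around 500%" implies a six-fold rise from the 2024 baseline. The two estimates use different baselines and may count different things, so they should not be added together.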

Research from Harvard University shows how quickly this material spreads once it appears online. Views can build fast, especially on large platforms, which makes the impact harder to contain.

Behind all of this, the reality is simple. These numbers reflect real people whose images are used without permission. The scale keeps growing, but the human impact stays the same.

Synthetic Media on Social Platforms

Harvard’s analysis of platform X revealed that synthetic content prevalence peaked in March 2023 following the release of Midjourney V5. After a subsequent decline, the rate stabilized at around 0.2% of all community notes.

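That might sound small. But context matters: the figure is a share of Community Notes, which cover only a fraction of what gets posted, so on a platform with hundreds of millions of daily posts even a small share can correspond to a large absolute volume.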

The research found that most of the identified synthetic content was non-political. Deepfakes targeting political figures made up a smaller but significant portion, which shows the technology serving both entertainment and manipulation.

How AI Porn Generators Work

Most AI porn generators follow a similar pattern. Users input text prompts describing desired content, select style preferences, and adjust parameters like image resolution or artistic style.

The AI processes these inputs through trained models that understand relationships between words and visual elements. Within seconds to minutes, depending on complexity and queue times, the system outputs generated images or videos.

Some platforms offer additional features:

- Reference image uploading to guide style or appearance

- Negative prompts to exclude unwanted elements

- Resolution upscaling for higher quality outputs

- Batch generation to create multiple variations

- Video generation from static images or text descriptions

Pricing typically follows tiered structures. Free tiers offer limited daily generations at lower resolutions. According to industry sources, mid-tier plans increase generation limits significantly (200-500 images per month) and add higher resolution options. Premium creator tiers unlock unlimited or very high generation limits (1000+ images per month), HD video generation, faster queues, and advanced customization features.

Check specific platforms’ official websites for current pricing, as subscription costs and features change frequently.

The Consent Crisis

Here’s where the ethical foundation collapses. The vast majority of people depicted in AI-generated deepfake pornography never consented to their likeness being used this way.

The technology enables anyone with photos of a person—pulled from social media, yearbooks, professional profiles, anywhere—to create explicit imagery. Victims often don’t know these images exist until they surface online or someone sends them directly.

The psychological impact is devastating. Victims report anxiety, depression, reputational damage, and harassment. Some lose jobs or educational opportunities when fake imagery spreads.

And the law has struggled to keep up. Until recently, many jurisdictions had no specific statutes addressing non-consensual deepfake pornography.

Recent Legislative Action

Congress passed the Take It Down Act in early 2025 to address this gap. The bipartisan legislation, which passed the House overwhelmingly and the Senate unanimously, enacts stricter penalties for distributing non-consensual intimate imagery, including AI-generated deepfakes.

According to reporting from Senator Klobuchar’s office, President Trump signed the Take It Down Act in May 2025. Its criminal provisions took effect immediately, while covered platforms were given one year to set up a notice-and-removal process. The measure targets both traditional revenge porn and AI-generated deepfake nudes.

Individual states have moved faster. Texas enacted criminal consequences for AI-generated pornography. Other jurisdictions followed with similar measures.

But enforcement remains challenging. Content spreads globally within hours. Platforms hosting this material often operate in jurisdictions with minimal regulation. Victims face an exhausting game of whack-a-mole trying to remove content that keeps resurfacing.

Platform Moderation Challenges

Social media platforms face enormous pressure to prevent the spread of AI-generated pornography, particularly non-consensual imagery. The results have been mixed.

After Elon Musk purchased Twitter (now X) in 2022, he claimed that “removing child exploitation is priority #1.” Yet in January 2026, AI-generated pornography flooded the platform when X’s AI chatbot Grok reportedly turned prompts into explicit content.

The New Yorker reported on the incident, noting that the technology brought millions of eyeballs to the site even as it raised serious questions about content moderation and platform responsibility.

The Federal Trade Commission weighed in on broader AI moderation challenges. In a June 2022 report, the FTC warned about using artificial intelligence to combat online problems, expressing concern about AI harms including inaccuracy, bias, discrimination, and commercial surveillance creep.

The problem? AI-generated content detection is an arms race. As detection improves, generation techniques evolve to evade it. What works today might fail tomorrow.

Who’s Most Affected

Women bear the brunt of AI porn abuse. The 2019 Deeptrace study found that nearly all deepfake pornography involves women, and that pattern appears to have continued.

But the victim profile has expanded:

- Celebrities and public figures: High-profile targets with abundant online imagery

- Students and young people: Increasingly targeted with fake nudes shared among peers

- Ex-partners: Victims of revenge porn using new AI tools

- Professionals: People whose careers suffer when fake imagery surfaces

- Minors: Disturbingly, children whose photos are manipulated into explicit content

The last category represents a particularly alarming development. Existing child exploitation material gets combined with AI tools to create new illegal content. Law enforcement agencies worldwide have sounded alarms about this trend.

Technical Detection Methods

Researchers and platforms have developed various approaches to identify AI-generated content. These include:

| Detection Method | How It Works | Limitations |
|---|---|---|
| Artifact Analysis | Identifies visual inconsistencies like unnatural blurring, asymmetry, or distorted backgrounds | Advanced generators minimize artifacts |
| Metadata Examination | Checks file properties for generation signatures | Easily stripped or falsified |
| AI Classifiers | Machine learning models trained to distinguish real from synthetic | Accuracy decreases as generators improve |
| Biological Signals | Analyzes blinking patterns and pulse signals in facial videos | Only works for video; newer generators increasingly replicate these signals |
| Blockchain Verification | Cryptographic proof of authentic content provenance | Requires adoption across platforms and devices |

None of these methods offers perfect reliability. The technology evolves faster than detection capabilities.

The Authenticity Problem

Here’s a darker implication: as AI-generated content becomes indistinguishable from reality, it erodes trust in all media. When anyone can create convincing fake imagery, how do we trust anything?

This extends beyond pornography. Political deepfakes, fraud schemes using fake videos, and manufactured evidence in legal proceedings all become easier. The synthetic media problem threatens the foundation of verifiable truth.

The Business of AI Porn

AI porn has become a significant commercial enterprise. Platforms offering generation tools monetize through subscription tiers, per-generation credits, and premium features.

Some platforms position themselves as adult entertainment alternatives, creating synthetic performers for fantasy scenarios without involving real people. Advocates argue this reduces exploitation in traditional adult content production.

Critics counter that it normalizes objectification, creates unrealistic body standards, and trains AI models on content that may not have been ethically sourced.

The economic incentives are substantial. Adult content consistently drives early adoption of new technologies, from VHS to streaming video to virtual reality. AI generation is no exception.

Psychological and Social Impact

The proliferation of AI porn raises concerns beyond individual victims. Researchers worry about broader social effects:

- Normalized sexual objectification and unrealistic physical standards

- Desensitization to increasingly extreme content

- Reduced empathy toward real people depicted in intimate imagery

- Erosion of trust in authentic relationships and media

- Impact on developing sexuality among young people exposed to synthetic content

Long-term studies on these effects remain limited. The technology has outpaced research capacity to understand consequences.

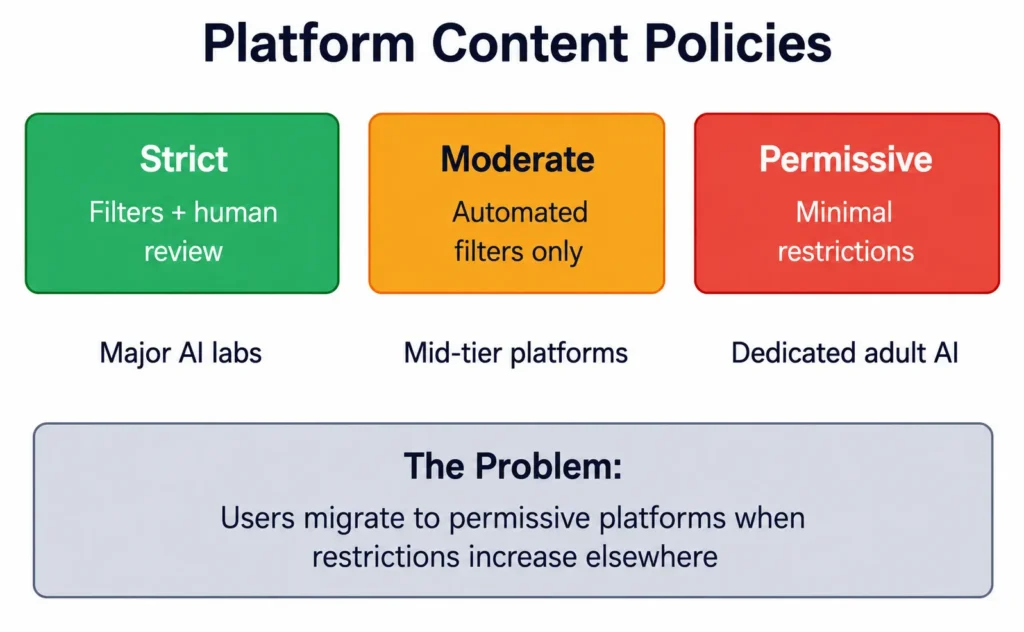

What Platforms Are Doing

Major AI companies have implemented various safeguards, with mixed results. Many text-to-image generators include content filters that reject prompts requesting explicit imagery or depicting real people.

But enforcement is inconsistent. Users quickly discover workarounds through prompt engineering—using euphemisms, code words, or layered requests that bypass filters.

Some platforms operate with minimal restrictions, explicitly catering to adult content generation. These services often host in permissive jurisdictions and accept cryptocurrency to avoid payment processor restrictions.

Protecting Yourself

While no method guarantees complete protection, certain steps reduce risk of becoming a deepfake target:

- Limit high-resolution facial photos online: Fewer clear images make realistic deepfakes harder to create

- Adjust social media privacy settings: Restrict who can view and download photos

- Use reverse image search periodically: Check if your photos appear in unexpected contexts

- Watermark professional photos: Makes unauthorized use more traceable

- Consider deepfake detection services: Some platforms monitor for unauthorized use of your likeness

For public figures and professionals with significant online presence, complete image control isn’t realistic. The focus shifts to rapid response when fake content surfaces.

If You’re Victimized

Discovering non-consensual AI-generated imagery of yourself is traumatic. Take these steps:

- Document everything: screenshots, URLs, timestamps

- Report to the hosting platform through abuse/DMCA processes

- Contact the Cyber Civil Rights Initiative or similar organizations for support

- Consult with an attorney familiar with digital privacy law

- File a police report if the content involves minors or violates local laws

- Consider working with reputation management services for removal
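
For the documentation step, one simple approach is to keep a structured log entry per item of evidence. The sketch below is only an illustration under our own assumptions: the function `evidence_record`, its field names, and the example URL are hypothetical, and an attorney or support organization may ask for a different format.

```python
import hashlib
import json
from datetime import datetime, timezone


def evidence_record(url: str, screenshot_bytes: bytes, note: str = "") -> dict:
    """Describe one piece of evidence: where, when, and a file fingerprint."""
    return {
        "url": url,
        "captured_at_utc": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        # SHA-256 lets you show later that the saved file was not altered.
        "sha256": hashlib.sha256(screenshot_bytes).hexdigest(),
        "note": note,
    }


# Placeholder bytes stand in for a real screenshot file's contents,
# e.g. open("screenshot.png", "rb").read()
record = evidence_record(
    url="https://example.com/post/123",
    screenshot_bytes=b"placeholder screenshot bytes",
    note="Reported to platform via abuse form",
)
print(json.dumps(record, indent=2))
```

Storing the hash alongside the URL and a UTC timestamp makes it easier to show that a saved screenshot is the same file that was captured at the time.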

The Take It Down Act provides new legal recourse in the United States. Similar legislation exists or is under consideration in other jurisdictions.

The Future of AI Porn

Technology will continue advancing. Video generation quality improves monthly. Real-time deepfakes already exist in prototype. Interactive AI-generated content is emerging.

Some developments on the horizon:

- Personalized AI companions: Chatbots with visual components for synthetic relationships

- Virtual reality integration: Immersive environments with AI-generated participants

- Voice synthesis combination: Matching visual deepfakes with cloned voices

- Real-time generation: Creating content during video calls or streams

Each advancement raises the stakes for consent, privacy, and authenticity.

Regulatory Responses

Governments worldwide are grappling with regulation. Approaches vary:

| Jurisdiction | Regulatory Approach | Status (2026) |
|---|---|---|
| United States | Federal Take It Down Act plus state-level laws | Active enforcement beginning |
| European Union | AI Act includes deepfake disclosure requirements | Implementation phase |
| United Kingdom | Online Safety Act addresses synthetic intimate imagery | Ongoing refinement |
| Australia | eSafety Commissioner granted takedown powers | Active but limited scope |
| South Korea | Criminal penalties for deepfake creation and distribution | Strict enforcement |

Enforcement remains the challenge. Cross-border content distribution, anonymous platforms, and jurisdictional limitations complicate prosecution.

Industry Self-Regulation Efforts

Some AI companies have formed coalitions to address synthetic media harms. Initiatives include content authentication standards, watermarking protocols, and shared blocklists of known abusers.

But participation is voluntary. Companies operating explicitly in adult content generation have little incentive to restrict their core business model.

The tension between free expression, adult content rights, technological capability, and protection from abuse remains unresolved. No consensus exists on where lines should be drawn or who should draw them.

Frequently Asked Questions

Is AI porn legal?

The legality depends on jurisdiction and specific circumstances. Creating purely synthetic content of fictional characters is generally legal in most places. However, knowingly publishing AI-generated pornography that depicts real people without their consent is illegal under the Take It Down Act in the United States and similar laws in other countries. Content depicting minors, whether real or synthetic, is illegal virtually everywhere. Laws continue evolving as technology advances.

How can you tell if content is AI-generated?

Detection has become increasingly difficult as generation quality improves. Early deepfakes showed obvious artifacts like unnatural blurring, incorrect lighting, or facial distortions. Modern AI-generated content often appears indistinguishable from real photos or videos to the human eye. Specialized detection software can identify some synthetic media, but accuracy varies and decreases as generation techniques evolve. When in doubt, assume any explicit content could be manipulated.

What should you do if someone creates AI porn of you?

Document the content immediately with screenshots and URLs. Report it to the hosting platform through their abuse reporting system. Under the Take It Down Act, platforms must remove non-consensual intimate imagery including deepfakes. File a police report if the content violates criminal laws. Contact organizations like the Cyber Civil Rights Initiative for support and resources. Consult an attorney familiar with digital privacy law to explore civil remedies. Act quickly, as content spreads rapidly online.

How do AI porn generators work?

AI porn generators use machine learning models trained on large datasets of images. Most employ either Generative Adversarial Networks (GANs) or diffusion models. Users input text descriptions or reference images, and the AI generates new explicit content matching those parameters. Some platforms specialize in face-swapping, mapping one person’s face onto existing adult content. The technology has become accessible through simple interfaces requiring no technical expertise, lowering barriers to creation and misuse.

Why are women the primary targets?

According to the 2019 Deeptrace study, nearly all deepfake pornography involves women. This reflects broader patterns of technology-enabled abuse and gender-based harassment. Women, particularly those in public life, face disproportionate targeting for intimate image abuse. The combination of readily available photos online, societal objectification, and revenge motivations creates conditions where women become primary targets. The power dynamic inherent in non-consensual pornography serves to humiliate, control, and harm victims.

Can AI porn platforms be shut down?

Shutting down AI porn platforms faces significant challenges. Many operate in jurisdictions with minimal regulation or enforcement. Platforms accepting cryptocurrency and hosting through decentralized services evade traditional legal pressure. When one platform closes, others quickly emerge. Law enforcement focuses primarily on platforms distributing illegal content like child exploitation material. Platforms generating legal adult content, even if ethically questionable, receive less regulatory attention. Effective control requires international cooperation and updated legal frameworks that don’t yet exist.

What is the difference between AI porn and deepfakes?

AI porn is the broader category encompassing all sexually explicit content created using artificial intelligence. This includes entirely synthetic people and scenarios. Deepfakes are a specific subset involving manipulation of real people, typically through face-swapping technology that places someone’s likeness onto existing pornographic content or creates fake videos of actual individuals. All pornographic deepfakes are AI porn, but not all AI porn involves deepfakes. The distinction matters legally and ethically, as deepfakes raise consent issues that purely fictional synthetic content may not.

Moving Forward

AI porn represents a fundamental collision between technological capability and ethical responsibility. The tools exist and will continue improving. The question isn’t whether AI can generate explicit content—it demonstrably can. The question is how societies balance innovation, expression, and protection from harm.

For individuals, awareness is the first defense. Understanding the technology, its capabilities, and its risks enables better decisions about online privacy and image sharing.

For platforms, the challenge is developing effective moderation that prevents abuse without becoming oppressive censorship. That balance remains elusive.

For lawmakers, the imperative is creating enforceable regulations that protect victims while respecting legitimate uses of generative AI. The Take It Down Act represents progress, but enforcement will determine effectiveness.

For society broadly, the deeper question persists: as synthetic media becomes indistinguishable from reality, how do we maintain trust in what we see and hear?

The technology won’t be uninvented. The challenge ahead is learning to coexist with it while protecting the vulnerable from exploitation and abuse.

Stay informed about AI developments, protect your digital privacy, and support legislation that holds creators and platforms accountable for non-consensual content. The decisions made now will shape the relationship between artificial intelligence and human dignity for decades to come.